How telecom deepfake audio fraud detection equips call center agents with the awareness, protocols, and escalation paths to identify AI-generated voice impersonation during high-value account changes and financial transactions.

Deepfake audio technology has reached a level of sophistication where AI-generated voice can convincingly impersonate a specific individual in real-time conversation. For telecom call centers, this means that the traditional method of authenticating a caller by recognizing their voice or verifying that they sound like the account holder is no longer reliable. A fraudster equipped with a deepfake voice model can call to authorize a SIM swap, approve an account change, or redirect billing, sounding exactly like the real subscriber.

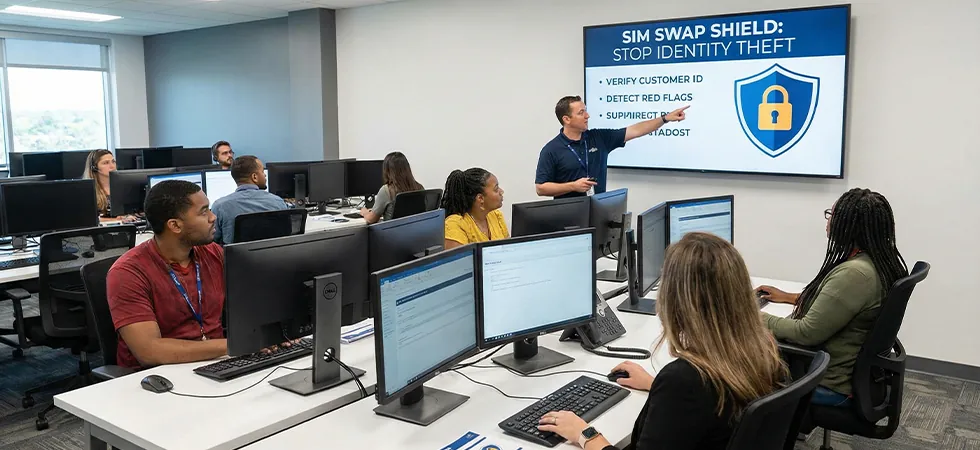

Telecom deepfake audio fraud detection is not primarily a technology deployment. It is an agent training and protocol challenge. Agents must understand that voice itself is no longer proof of identity, follow enhanced verification protocols for high-value interactions, recognize the behavioral cues that distinguish real callers from AI-driven impersonations, and escalate suspicious interactions to specialized fraud teams. Sequential Tech trains agents on this new threat landscape and deploys the human awareness layer that complements voice authentication technology.

Why Agents Are the Critical Detection Layer

Voice biometric systems can detect some deepfakes by analyzing audio artifacts inaudible to the human ear. But these systems are not infallible: deepfake technology improves faster than detection technology, and the arms race heavily favors attackers. The agent on the call is the second detection layer: noticing conversational anomalies, asking unexpected questions that require genuine knowledge, and recognizing when a caller’s behavior does not match the established pattern for that account.

Deepfake Behavioral Indicators and Agent Detection Techniques

| Behavioral Indicator | Why Deepfakes Exhibit This | Agent Detection Technique | Escalation Trigger |
|---|---|---|---|
| Slight response delay | Real-time deepfake processing adds 200–500 ms latency | Agent notes unusual pauses before responses, especially to unexpected questions | Consistent delay pattern across conversation flags for enhanced verification |
| Inability to handle spontaneous questions | AI model trained on scripted scenarios struggles with off-script prompts | Agent asks unexpected personal question not related to account (e.g., “How’s the weather where you are?”) | Evasive or generic responses to spontaneous questions trigger escalation |
| Emotional flatness | Deepfake models often lack natural emotional variation | Agent notes whether caller shows natural frustration, humor, or concern | Robotically even tone throughout high-stress interaction flags for review |
| Overly cooperative behavior | Real callers push back, negotiate, or express confusion; deepfakes are programmed to comply | Agent assesses whether caller is unusually agreeable to all verification steps | Zero pushback on inconvenient verification requests is itself suspicious |
| Background audio inconsistency | Deepfake audio sometimes lacks natural environment sounds or has synthetic artifacts | Agent listens for unnatural silence, repetitive background, or audio quality shifts | Sterile audio environment on supposedly mobile call triggers investigation |
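
For teams that log agent observations in a call-handling tool, the indicator-to-trigger mapping in the table could be encoded roughly as follows. This is a minimal sketch, not a description of any real system: the indicator keys, the `Action` names, and the `actions_for` helper are all hypothetical, and treating "zero pushback" as a review flag is an assumption, since the table only calls it suspicious.

```python
from enum import Enum


class Action(Enum):
    ENHANCED_VERIFICATION = "enhanced verification"
    ESCALATE = "escalate to fraud team"
    REVIEW = "flag for review"
    INVESTIGATE = "open investigation"


# Hypothetical indicator keys; the actions paraphrase the
# "Escalation Trigger" column of the table.
TRIGGER_ACTIONS = {
    "consistent_response_delay": Action.ENHANCED_VERIFICATION,
    "evasive_spontaneous_answers": Action.ESCALATE,
    "flat_tone_under_stress": Action.REVIEW,
    # Assumption: the table only says zero pushback is "itself suspicious".
    "zero_pushback": Action.REVIEW,
    "sterile_mobile_audio": Action.INVESTIGATE,
}


def actions_for(observed):
    """Return the distinct actions for the indicators an agent noted.

    Actions come back in table order; unknown indicator keys are ignored.
    """
    result = []
    for indicator, action in TRIGGER_ACTIONS.items():
        if indicator in observed and action not in result:
            result.append(action)
    return result


if __name__ == "__main__":
    noted = {"sterile_mobile_audio", "consistent_response_delay"}
    for action in actions_for(noted):
        print(action.value)
```

A lookup table keeps the policy in one place, so a fraud team could adjust which indicator leads to which action without touching the logic.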

Enhanced Verification Protocols for High-Value Interactions

Telecom deepfake audio fraud detection mandates enhanced verification for any interaction that modifies account security (SIM swaps, password resets, authorized user changes) or involves financial transactions (billing redirects, equipment purchases, plan upgrades above a threshold). Enhanced verification goes beyond standard knowledge-based questions to include callback to the number on file, out-of-band confirmation via the carrier’s app, or in-store identity presentation for the highest-risk changes.
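
The routing rule described above can be sketched as a small policy check. Everything named here is illustrative: the interaction kinds, the `PLAN_UPGRADE_THRESHOLD` value, and the choice of SIM swaps as the "highest-risk" category are assumptions, since the text does not specify a threshold or say which changes require in-store presentation. The sketch also assumes the threshold applies only to plan upgrades.

```python
from dataclasses import dataclass

# Interaction kinds taken from the paragraph above; the string keys are hypothetical.
SECURITY_CHANGES = frozenset({"sim_swap", "password_reset", "authorized_user_change"})
FINANCIAL_CHANGES = frozenset({"billing_redirect", "equipment_purchase"})

# Assumptions: the source names neither a threshold nor the highest-risk set.
PLAN_UPGRADE_THRESHOLD = 100.0
HIGHEST_RISK = frozenset({"sim_swap"})


@dataclass(frozen=True)
class Interaction:
    kind: str
    amount: float = 0.0  # only meaningful for plan upgrades in this sketch


def requires_enhanced_verification(interaction):
    """True when the interaction changes account security or moves money."""
    if interaction.kind in SECURITY_CHANGES or interaction.kind in FINANCIAL_CHANGES:
        return True
    if interaction.kind == "plan_upgrade":
        return interaction.amount > PLAN_UPGRADE_THRESHOLD
    return False


def verification_methods(interaction):
    """Return acceptable verification methods; an empty list means standard checks suffice."""
    if not requires_enhanced_verification(interaction):
        return []
    if interaction.kind in HIGHEST_RISK:
        return ["in_store_id_presentation"]
    return ["callback_to_number_on_file", "carrier_app_confirmation"]


if __name__ == "__main__":
    print(verification_methods(Interaction("password_reset")))
    print(verification_methods(Interaction("plan_upgrade", amount=40.0)))
```

Note that the decision depends only on the type of request, never on how convincing the caller sounds, which is the point of the protocol.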

“Voice is no longer identity. The agent who assumes the caller is genuine because they sound right is the agent who will process a deepfake-driven SIM swap. The trained agent treats every high-value interaction with healthy skepticism and follows enhanced verification regardless of how convincing the caller sounds.” — Voice Security Threat Report, 2026

TRAIN YOUR AGENTS FOR THE DEEPFAKE ERA

Sequential Tech’s agents are trained to detect deepfake behavioral indicators, follow enhanced verification protocols, and escalate suspicious voice interactions before high-value fraud is completed.